Serving Websites Using S3 and CloudFront’s Default Root Object

A Better Solution! A couple of years after this blog post was written, Amazon added features to S3/Route 53 to allow static sites with or without the “www” name, so you don’t need to follow the rather complicated routine below. See their blog post on the matter for full details and a walkthrough of the process in the AWS Console.

Warning: this post has a very high geek factor. I wouldn’t read this one unless you’re familiar with both Amazon S3, and with DNS, and interested in setting up a Default Root Object in CloudFront (which lets you serve a website from S3 without needing a filename on the end of the URL.)

Note:

S3 now (since February 2011) allows you to configure a default document for your S3 folders, which means you don’t need the CloudFront workaround for default root objects described as part of this blog entry. See Amazon’s blog entry for the announcement. I figured I’d leave this entry up, as it still shows you how to host a website entirely in S3 and how to use Amazon’s CloudFront CDN to serve it up efficiently across the planet, so it’s mostly still useful information. Thanks to Eric Pecoraro for pointing this out in the comments!

Introduction

Last year I’d idly wondered about hosting an entire website on Amazon S3. It’s pretty cheap, and you only pay for what you use. There’s no fixed monthly fee, unlike most hosting plans. It can serve files directly to the web quite easily, and you can point your own domains at it.

Obviously, as S3 is just storage, you can’t use any server-side code. You can’t stick a WordPress install there, for example, because S3 just serves files; it doesn’t have a PHP interpreter. But it can be used for any kind of static, non-interactive website, or one where the interactivity is limited to Javascript.

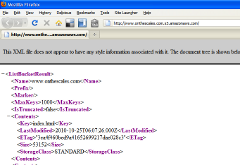

Turned out the main fly in the ointment was that S3 doesn’t have the concept of a default file to serve. That is, if I were to host www.example.com on S3, and someone visited it using “http://www.example.com”, the S3 server – unlike normal web servers – doesn’t look for an “index.html”, or whatever. Instead, S3 serves up either a listing of the underlying S3 bucket, in XML format, or a 403 Forbidden if your bucket isn’t world-readable. You can see what it looks like in your browser by going to my basic S3 bucket address here.

If you want to send people to your S3-based content, therefore, you’ll either need your own traditional web server to wrap your S3 content, or always provide them with a filename on the end of the URL you give them, e.g. http://www.example.com/index.html. This isn’t always ideal.

Enter the recent CloudFront announcement:

“Amazon CloudFront, the easy to use content delivery network, now supports the ability to assign a default root object to your HTTP or HTTPS distribution. This default object will be served when Amazon CloudFront receives a request for the root of your distribution…”

CloudFront isn’t S3, but it is Amazon’s own content delivery system, and it delivers the content straight from S3’s buckets. So, you can now use CloudFront to solve the default file problem – put your index.html file into your S3 bucket, and use CloudFront to serve it as the default file when someone visits your site.

In this article, I’ll go through how I created a simple website in an S3 bucket, then started serving it out under my own domain name.

Expected Result

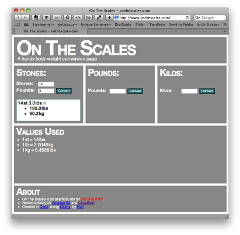

I created a simple website for converting body weights that solves a particular problem of mine – most weight conversion sites don’t bother with the peculiarly British system of stones and pounds¹ that I grew up with. It’s a one-page site with some CSS and Javascript support files. I wanted to serve it at the address http://www.onthescales.com.

Into The Bucket

First, I put my website in a bucket. I fired up Transmit and created a (North American area) bucket called “www.onthescales.com”. Then I threw my website’s files in it, including the main page, “index.html”. I made the bucket and all the files “world readable” in S3. (Any S3 client, for example the S3Fox Firefox extension, should let you do this.)

Because of the way S3 interacts with web requests, you can see the website if you point your browser at http://www.onthescales.com.s3.amazonaws.com/index.html. The S3 servers take everything before the “s3.amazonaws.com” as a bucket name, then find the named file on the path provided, in this case “index.html”.
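To make that mapping concrete, here's a minimal Python sketch of how a bucket name and a file path turn into one of these URLs, and back again. The function names are made up for illustration, and it only handles the plain `s3.amazonaws.com` endpoint used in this post.

```python
from urllib.parse import urlsplit

S3_ENDPOINT = "s3.amazonaws.com"


def s3_url(bucket: str, key: str) -> str:
    """Build the URL for an object: bucket name first, then the endpoint, then the path."""
    return f"http://{bucket}.{S3_ENDPOINT}/{key.lstrip('/')}"


def split_s3_url(url: str) -> tuple[str, str]:
    """Split such a URL back into (bucket, key), the way S3 reads it."""
    parts = urlsplit(url)
    host = parts.hostname or ""
    suffix = "." + S3_ENDPOINT
    if not host.endswith(suffix):
        raise ValueError(f"not an S3 bucket URL: {url}")
    # Everything before ".s3.amazonaws.com" is the bucket name.
    return host[: -len(suffix)], parts.path.lstrip("/")
```

So `s3_url("www.onthescales.com", "index.html")` gives the address above, and `split_s3_url` recovers the bucket and file name from it.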

To make things friendlier, I took the domain name that I owned, “www.onthescales.com”, and created a DNS CNAME record for it that pointed it at “s3.amazonaws.com”. Now, http://www.onthescales.com/index.html worked – I could see the website there.

Unfortunately, this is as far as you can get using S3 alone. If you were to go to http://www.onthescales.com with this setup, all you’d see would be that list of the files in the bucket. So, if I wanted to tell everyone about my website, I’d still have to make sure they included “index.html” on the end of the URL: http://www.onthescales.com/index.html. Not very friendly.

CloudFront to the Rescue

Enter CloudFront. First off, I needed to start serving my files using CloudFront pointing at my existing S3 bucket.

I followed the “Migrating from Amazon S3 to CloudFront” instructions in the Amazon CloudFront Developer Guide:

First I signed up for CloudFront. If you already have an S3 account, it’s just a couple of clicks, and a few seconds waiting for the “you’re signed up” email.

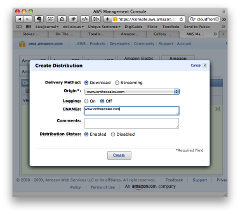

Then, I signed in to the AWS management console, and headed for the CloudFront section. As I’d not done anything with CloudFront before, I only had one option: Create Distribution. A Distribution is the basic object in CloudFront. A Distribution is configured to serve up an S3 bucket in a particular way.

The Create Distribution option prompted me for the S3 bucket I wanted to serve through CloudFront. I chose www.onthescales.com, and also told it the CNAME I wanted to use (the same value in this case: www.onthescales.com). I hit “Create”.

When you make changes in CloudFront, it takes a while to filter through. The status of your Distribution in the Console will be “InProgress” while stuff is still going on. My Distribution held at “InProgress” for five or ten minutes before changing to “Deployed”. (Just hit the “Refresh” button every now and again to check.)
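If you'd rather not sit there hitting Refresh, the same check can be scripted. This is only a sketch, assuming the present-day boto3 SDK for Python (which postdates this post): the function name, polling interval and retry count are my own inventions, while `get_distribution` and the `Status` field are what the SDK returns. The client is passed in, so nothing talks to AWS until you hand it a real one.

```python
import time


def wait_until_deployed(client, distribution_id, poll_seconds=30,
                        max_polls=40, sleep=time.sleep):
    """Poll a CloudFront distribution until its status is "Deployed".

    `client` is assumed to behave like boto3.client("cloudfront").
    Returns True once deployed, or False if we give up first.
    """
    for _ in range(max_polls):
        response = client.get_distribution(Id=distribution_id)
        if response["Distribution"]["Status"] == "Deployed":
            return True
        sleep(poll_seconds)  # still "InProgress": wait and ask again
    return False
```

With credentials set up, you'd call it with `boto3.client("cloudfront")` and your own distribution's ID.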

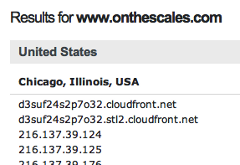

Each Distribution is assigned a DNS name. I could see from the console that my new distribution was “d3suf24s2p7o32.cloudfront.net”. Were my S3 objects being served there? I went to http://d3suf24s2p7o32.cloudfront.net/index.html, and lo and behold! There was my website.

So, the next stage was to re-point my domain name at the CloudFront distribution. I fired up my domain provider’s DNS panel and changed the CNAME record for www.onthescales.com to point it at d3suf24s2p7o32.cloudfront.net instead of s3.amazonaws.com.

Happily, I use OpenDNS’s DNS servers, so rather than waiting for the change in the CNAME record to trickle through I just went and prodded their CacheCheck service, and could see that “www.onthescales.com” was now pointing at CloudFront.

Now, while it’s impressive that my tiny website is now being delivered by an international CDN, at this point nothing’s actually changed for the end-user. You’d still have to go to http://www.onthescales.com/index.html to see the site.

Default Root Object

That’s where CloudFront’s Default Root Object comes in. All I needed to do was set my Distribution’s Default Root Object to “index.html” and I’d be done.

Unfortunately, it’s surprisingly difficult to set the Default Root Object. The “standard” way is to send an authenticated POST request to update your distribution’s configuration, as described in the Developer Guide, under “Creating a Default Root Object” section under “Working with Objects”.
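For the curious, here's roughly what the programmatic route looks like using boto3, the present-day AWS SDK for Python. It postdates this post, so treat this as a sketch rather than the exact request the Developer Guide describes. The function name is made up for illustration; `get_distribution_config` and `update_distribution` are the SDK's own calls. The update has to send back the ETag that came with the current configuration.

```python
def set_default_root_object(client, distribution_id, root_object="index.html"):
    """Set a distribution's Default Root Object.

    `client` is assumed to behave like boto3.client("cloudfront").
    Returns the configuration that was sent back to CloudFront.
    """
    # Fetch the whole current configuration, plus its ETag (a version marker).
    current = client.get_distribution_config(Id=distribution_id)
    config = current["DistributionConfig"]

    # Change just the one field we care about.
    config["DefaultRootObject"] = root_object

    # Send the full configuration back; IfMatch must carry the ETag we were given.
    client.update_distribution(
        Id=distribution_id,
        DistributionConfig=config,
        IfMatch=current["ETag"],
    )
    return config
```

With credentials configured, something like `set_default_root_object(boto3.client("cloudfront"), "YOUR_DISTRIBUTION_ID")` should do it, where the ID is whatever the console shows for your own distribution.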

But that’s just too damn complicated, and actually requires some programming. Was there an easier way?

The AWS Management Console doesn’t do the job, sadly. And Panic’s Transmit, my traditional Mac FTP and S3 client, doesn’t seem to have much in the way of CloudFront options.

Luckily, Transmit’s free, open source competitor Cyberduck just seems to be getting better and better, and gives you a nice simple interface to this slightly esoteric bit of CloudFront. (Anyone know if there’s an option for Windows or Linux users? Cyberduck seems to be in private beta for Windows at the moment, at least, so maybe there’s some hope there…)

EDIT: Andy from CloudBerry Lab says that CloudBerry Explorer Freeware has this capability under Windows, so that’s worth a look.

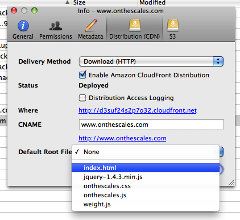

In Cyberduck, I connected to my S3 account, then right-clicked on my “www.onthescales.com” bucket and chose “Info”. Then I went to the “Distribution (CDN)” tab and chose “index.html” from the drop-down list that appeared under “Default Root File”. Voilà! Vastly easier than fiddling with authenticated POST requests and XML files yourself. Thank you, Cyberduck! Worth a donation, I reckon.

However, http://www.onthescales.com/ still just gave me a raw text listing of the bucket contents. I headed into my AWS console to see what I could see. Aha! The “State” was back to “InProgress” rather than “Deployed”.

I went to have a cup of tea. When I came back, the State of my distribution was “Deployed” again. And http://www.onthescales.com/ now showed me my website. Mission accomplished!

The Unfortunately Hacky Icing on the Cake

The final icing on the cake was to get the non-www version – http://onthescales.com – working. Unfortunately, I couldn’t get this to work using S3 and CloudFront alone.

As discussed in this ServerFault answer and the Amazon discussion thread it points to, it’s hard to map the non-WWW version of a domain name to CloudFront, because you can’t CNAME a root name (such as “onthescales.com”) in DNS. This is one of the more irritating limitations of DNS.

Also, you can’t do the normal workaround of using an A record pointing at the destination server’s IP address, because the whole point of the internationally-distributed, load-balancing CloudFront system is that your stuff is on multiple servers, allocated dynamically depending on your visitor’s whereabouts, so pointing your records at a single IP address would be rather dumb.
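To make the root-name problem concrete: broadly, a name that has a CNAME record isn't allowed any other kind of record, and the root of a domain always has others (SOA and NS at the very least, and usually MX for mail). Here's a small Python sketch that checks a list of records for that clash. The record list is invented for illustration, and `cname_conflicts` is a hypothetical helper, not part of any DNS library.

```python
def cname_conflicts(records):
    """Return the names that have a CNAME alongside any other record type.

    `records` is an iterable of (name, record_type) pairs.
    """
    types_by_name = {}
    for name, record_type in records:
        key = name.lower().rstrip(".")  # DNS names are case-insensitive
        types_by_name.setdefault(key, set()).add(record_type.upper())
    return sorted(
        name for name, types in types_by_name.items()
        if "CNAME" in types and len(types) > 1
    )


# Illustrative zone: the root name already needs SOA, NS and MX records.
example_records = [
    ("onthescales.com", "SOA"),
    ("onthescales.com", "NS"),
    ("onthescales.com", "MX"),
    ("onthescales.com", "CNAME"),      # the record we'd like to add
    ("www.onthescales.com", "CNAME"),  # fine: nothing else lives at www
]
print(cname_conflicts(example_records))  # -> ['onthescales.com']
```

The “www” name passes because the CNAME is the only record there; the root name fails because it can't shed its other records.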

So, for the non-WWW address, there’s no clean AWS-only solution. The best I could do was to rent some cheap traditional hosting space, and point onthescales.com at that server’s IP address using an A record rather than a CNAME. Then just make that a 301 redirect to www.onthescales.com (I used a .htaccess file.)
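Purely to illustrate what that redirect amounts to, here's the same idea as a tiny Python server using only the standard library. This is not the .htaccess file I used, and the names `redirect_location`, `RedirectHandler` and `serve` are made up for the sketch; it just shows that the non-WWW server has one job, which is to answer every request with a 301 pointing at the “www” address with the same path.

```python
from http.server import BaseHTTPRequestHandler, HTTPServer

CANONICAL_HOST = "www.onthescales.com"


def redirect_location(path: str) -> str:
    """Work out where a request for `path` should be sent."""
    return f"http://{CANONICAL_HOST}{path}"


class RedirectHandler(BaseHTTPRequestHandler):
    """Answer every GET or HEAD with a permanent redirect to the www host."""

    def do_GET(self):
        self.send_response(301)
        self.send_header("Location", redirect_location(self.path))
        self.end_headers()

    do_HEAD = do_GET


def serve(port: int = 8080) -> None:
    """Run the redirect server until interrupted (port 8080 is an arbitrary choice)."""
    HTTPServer(("", port), RedirectHandler).serve_forever()
```

Calling `serve()` starts it; a request for `/index.html` would then be redirected to http://www.onthescales.com/index.html.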

It’s a bit nasty, but as far as I can tell, it’s the only way to ensure that the non-WWW version of a site will wind up pointing at CloudFront. At least it should be a fairly minimal expense.

And that’s the final solution that I used. If you go to http://www.onthescales.com, only CloudFront and S3 are involved. You’ll be sent to a CloudFront server that will serve local-to-you cached copies of the files in my www.onthescales.com S3 bucket. Hackily, though, if you go to http://onthescales.com, you’ll hit some cheap GoDaddy hosting that’ll 301-redirect you to http://www.onthescales.com, where CloudFront will take over.

Conclusion

Even with the slightly hacky ending, I think this was still an interesting experiment. If you have a pretty static website, with no server code, especially one with lots of big content – media files, say – then you can definitely now use S3 and CloudFront to do all the hosting and delivery for you, and not bother with setting up your own web server, whether it’s traditional hosting or an Amazon EC2 instance. You can serve directly from your S3 bucket, and it should be very fast, very reliable, and relatively cheap, depending on how much you’re serving.

¹ There are fourteen pounds to a stone, and I’m rubbish at mental arithmetic, especially when I have to work out remainders when converting back to the British weirdness.

20 Comments

Andy, CloudBerry Lab

October 28, 2010

CloudBerry Explorer freeware is an option for Windows users. It comes with full support for S3 and CloudFront, namely for HTTP and streaming distributions, private content, object invalidation and root object setup.

http://s3.cloudberrylab.com/

Matt

October 28, 2010

Hi Andy,

Thanks for that; I’ve updated the post to link to CloudBerry.

Matt

JJ Geewax

October 31, 2010

For the last hacky bit, if you’re using ZoneEdit for DNS records, they have a “WebForward” type, where you can say “http://yoursite.com” redirects to “http://www.yoursite.com”. Works great for me.

Thanks for the article.

Richard

November 8, 2010

Hi, I’m just easing myself into EC2 & S3 and although I doubt this would be of use for my client’s dynamic site it’s certainly an interesting concept and end result. I enjoyed reading it (It’s rare I read a full blog post I just happen to stumble on).

Richard.

Tom

December 6, 2010

Hi Matt

Really useful article, thank you. Hope you don’t mind me asking, but what kind of price are you being billed for doing it this way?

Thanks

Matt

December 6, 2010

Hi Tom,

Sorry, I can’t really help with pricing. Because I don’t use much storage or bandwidth, and I don’t actually use any compute power (EC2, etc) I don’t get charged enough for it to really register. Last month my entire Amazon Web Services bill was $1.49, including serving most of the embedded images and videos on this blog.

Amazon have a calculator for estimating pricing, if you know how much storage/bandwidth you’re likely to use…

Michael Burns

December 13, 2010

What a good idea for hosting.

For the $1.49 how much monthly bandwidth did you use Matt?

Also, I wonder if there is a way to show custom 404 error pages, possibly in future I guess.

Matt

December 18, 2010

Hi Michael!

My $1.49 was made up of:

…plus tax.

Paul

December 17, 2010

And this is why the world went metric.

1,000 grams in a kilogram. It’s easy.

Eric P

January 18, 2011

The hacky icing on the cake is not necessary (with Namecheap.com as a registrar).

Click on over to http://namecheap.com. The control panel enables you to assign a CNAME to the root “@” as well as to WWW or any other sub-domain.

Don’t ask me why this works (perhaps they do some magic in the background). But it DOES indeed work. I tested it with your solution and it works flawlessly.

I created two CF distributions from the same bucket:

AWS CF, VIA Cyberduck:

BUCKET: mydomain.com.s3.amazonaws.com => CNAME: domain.com

BUCKET: mydomain.com.s3.amazonaws.com => CNAME: www.domain.com

Namecheap.com:

@ => d3lgou1sc8ujbe.cloudfront.net (root)

www => d3lgoy22c8ujbe.cloudfront.net (sub-domain)

(Above entries are not the real cloudfront.net addresses. I used them as you described, after they showed “deployed” in the AWS CF UI.)

Thanks for the great article!

Eric Pecoraro

January 19, 2011

Update and corrections to my earlier post about serving DNS root and WWW from Namecheap.com for static HTML websites.

After some trial & error (too much of both), I determined that the correct way to serve both “www” and root is as follows:

1. Create one S3 bucket (doesn’t matter the physical name) and put Web files (and folders) there.

2. Create ONE (and only one) CloudFront Distribution (“Origin Bucket” from step 1. above) with TWO CNAMEs (both sub domain WWW and root), separated by a return as follows:

domain.com

www .domain.com (No space after www. It’s here to prevent this form making this example into a hyper-link)

I added the CNAMEs in the AWS control panel. In Cyberduck you can add two CNAMEs separated by a space. I assume that will work, but I didn’t try.

3. With Cyberduck, assign “Default Root File” (as index.htm in my case). This is not possible in the AWS control panel.

IMPORTANT NOTE: Must wait for the CloudFront Distribution to be in “Deployed” status before applying the “Default Root File”.

4. In the Namecheap.com DNS control panel (“All Host Records” section) link both “www” and root to the resulting CloudFront “Domain Name” as follows:

@ => x2glou1sc8ujbe.cloudfront.net (root)

www => x2lgou1sc8ujbe.cloudfront.net (sub-domain)

I suppose you could do this with registrars other than Namecheap.com, if applying CNAME to root “@” is available. I didn’t try any other registrar since all my domains are there.

This serves the Website (both root and www) correctly with no hacks whatever. Mucho credit/props to Matt for the original post.

EP

Matt

January 19, 2011

Hi EP,

Thanks for that! I’m curious as to what kind of records namecheap.com is creating there, as the CNAME problem isn’t a limitation with particular providers; it’s a limitation with DNS itself.

Are you sure this is providing CNAME records? Can you see what “dig A domain.com” returns?

Thanks,

Matt

Eric Pecoraro

January 19, 2011

Hi Matt,

Using the excellent tool set at MxToolBox.com (http://www.mxtoolbox.com/CNAMELookup.aspx) CNAME lookup output is below.

Please note, I’ve altered the actual CloudFront Domain Name and real domain name here to protect our client’s privacy. Other than that, it’s real data.

I’m not sure how Namecheap.com (which uses Enom, Inc.’s system) is doing it, but it’s working, and the root shows as a CNAME record.

Auth Type Domain Name Canonical Name TTLN CNAME www.domain.com d3jcbjp4ga9gc6.cloudfront.net 1 min

Auth Type Domain Name IP Address TTL

N A d3jcbjp4ga9gc6.cloudfront.net 216.137.43.29

N A d3jcbjp4ga9gc6.cloudfront.net 216.137.43.88

N A d3jcbjp4ga9gc6.cloudfront.net 216.137.43.97

N A d3jcbjp4ga9gc6.cloudfront.net 216.137.43.147

N A d3jcbjp4ga9gc6.cloudfront.net 216.137.43.182

N A d3jcbjp4ga9gc6.cloudfront.net 216.137.43.202

N A d3jcbjp4ga9gc6.cloudfront.net 216.137.43.235

N A d3jcbjp4ga9gc6.cloudfront.net 216.137.43.244

N CNAME domain.com d3jcbjp4ga9gc6.cloudfront.net 1 min

Auth Type Domain Name IP Address TTL

N A d3jcbjp4ga9gc6.cloudfront.net 216.137.43.33 1 min

N A d3jcbjp4ga9gc6.cloudfront.net 216.137.43.37 1 min

N A d3jcbjp4ga9gc6.cloudfront.net 216.137.43.50 1 min

N A d3jcbjp4ga9gc6.cloudfront.net 216.137.43.96 1 min

N A d3jcbjp4ga9gc6.cloudfront.net 216.137.43.115 1 min

N A d3jcbjp4ga9gc6.cloudfront.net 216.137.43.126 1 min

N A d3jcbjp4ga9gc6.cloudfront.net 216.137.43.195 1 min

N A d3jcbjp4ga9gc6.cloudfront.net 216.137.43.241 1 min

Eric

Eric Pecoraro

January 20, 2011

Another update (WARNING) regarding CNAME records applied to root at Namecheap.com.

It seems (for reasons unknown) that when a CNAME is applied to root, it COMPLETELY DISABLES the MX records for the domain.

Unfortunately it took me 1.5 days to figure this out…during which time no mail was delivered for the domain in question.

It appears, regarding this issue, that you can’t have your cake and eat it too.

Matt

January 20, 2011

Hi Eric,

Having done some digging, if you’ve got a CNAME record, you can’t have any other records (e.g. MX) for that “node” (name), according to the RFC — see this article, which I got to from this answer on ServerFault.

It seems from this discussion that that implies you can’t CNAME a root name because you at least have to have a SOA record as well.

In the case of MX records, I think what’s probably happening is that your MX records and your CNAME are being interpreted as pointing your mail at the CloudFront servers, which is why it’s not being delivered (presumably they don’t even run SMTP.) I’m guessing if you could see one of the bounce messages, it would either be a DNS error or a delivery timeout after repeated attempts to connect to a nonexistent mail server on CloudFront…

Sadly, therefore, I stand by my original “you can’t CNAME a root name” claim, even though some servers obviously allow you to shoot yourself in the foot by trying it… Thanks for reporting back; hopefully people will find this warning useful!

Matt

Eric Pecoraro

January 21, 2011

Agreed.

Even though it’s doable via Namecheap.com’s DNS controls, it’s a bad hack (as it breaks the RFC). I’d rather our team control the traffic via a legal hack, via 301 redirect. Or, if the URI/request needs to be included (for historical purposes), .htaccess which would reroute the entire URL string (on *nix).

Eric Pecoraro

February 18, 2011

Some new and interesting news from Amazon on hosting a website on S3:

http://aws.typepad.com/aws/2011/02/host-your-static-website-on-amazon-s3.html

http://docs.amazonwebservices.com/AmazonS3/latest/dev/index.html?WebsiteHosting.html

The root issue is still a problem, but now there is true indexing (ALL urls ending in “/” route to index files, not just the root index as with CDN), and additional 404 page capabilities.

So, the CDN/index workaround is no longer needed.

Matt

April 16, 2011

Heya Eric!

Sorry it’s taken me so long to reply; I’ve been a bit rubbish this winter, and it’s been compounded a bit by illness (nothing terrible, just serial colds and flu, damn it!)

Yeah, I spotted that blog entry, too, which made me very happy. I’ll update the article, and maybe I’ll post a new blog entry about simple hosting with *just* S3…

As always, thanks for commenting!

David

July 13, 2011

Has anyone found a way to use Route53 to avoid CNAMEs? I have heard a rumor that AWS is looking at enabling Route53 to route apex domain requests to an S3 bucket or CloudFront distribution. This would be great as you could register a domain with Route53 [AWS’s DNS] and point it to S3 without using 301s / CNAMEs.

Lauri

December 29, 2011

Wwwizer has a free HTTP redirection service.

You can use that one for the domain.com –> http://www.domain.com redirection hack. It’s free and it’s working. Even Werner Vogels is using it.